Hardware RAID boxes are cool things. Plug them in and they behave like a big, fast disk. Configure them properly and they can be up to 30% faster still.

There is great software RAID support in Linux these days. I still prefer having RAID done by a hardware component that operates independently of the OS. This reduces dependencies a great deal and takes load off the server.

Currently, my favorite hardware RAID setup is a rack-mountable server with lots of disk bays and an 8- or 16-port Areca controller, with all disks configured as one large RAID 6 device.

So far, so simple. Even without any optimization, performance is quite convincing. But surely there must be something you can do to improve on it.

RAID5 and RAID6 work by striping the data across multiple disks and writing parity information so that the data can be recovered when a disk breaks. This means that even when only a small amount of data is written, the parity information has to be updated as well. Small updates are therefore not very efficient on a RAID5/6 configuration: the controller typically has to read the old data and parity before it can compute and write the new parity. The optimal amount of data to write to the system in one go is defined by the 'stripe size' of the RAID configuration.

By working with stripe-sized chunks of data, you help the RAID perform at its best. A common stripe size is 64 KByte, which means that everything should be aligned to 64 KByte boundaries.
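To make the rule concrete, here is a small bash sketch. The function name `stripe_friendly` is made up for illustration. It checks whether a write, given by its offset and length in KByte, consists only of whole stripes that start on stripe boundaries. It uses the simple model of this article and ignores how a particular controller maps stripes onto disks.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of any tool): prints "yes" if a write of
# LENGTH_KB starting at OFFSET_KB covers only whole, aligned stripes of
# STRIPE_KB, and "no" otherwise.
stripe_friendly() {
  local offset_kb=$1 length_kb=$2 stripe_kb=$3
  if (( offset_kb % stripe_kb == 0 && length_kb % stripe_kb == 0 )); then
    echo yes
  else
    echo no
  fi
}

stripe_friendly 0 64 64     # one full, aligned stripe: yes
stripe_friendly 128 256 64  # four full, aligned stripes: yes
stripe_friendly 32 64 64    # right size, but straddles two stripes: no
stripe_friendly 0 16 64     # small update, forces a partial parity update: no
```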

Hard disk partitions in the PC world are normally aligned to hard disk tracks. This made perfect sense about 1'000 years ago, when this information had something to do with the physical layout of a hard disk. These days, though, cylinders, tracks and heads are pure fantasy, carried along for the benefit of DOS compatibility. Ever wondered what this disk would look like, physically?

243152 cylinders, 255 heads, 63 sectors/track
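Multiplying the geometry out shows that these numbers are just an encoding of the capacity. Here is a bash sketch that assumes 512-byte sectors, as sfdisk reports them. `chs_bytes` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: capacity in bytes described by a CHS geometry,
# assuming 512-byte sectors.
chs_bytes() {
  local cylinders=$1 heads=$2 sectors_per_track=$3
  echo $(( cylinders * heads * sectors_per_track * 512 ))
}

chs_bytes 243152 255 63   # prints 1999993282560, i.e. about 2 TByte
```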

Back in the good old days, people were cautious when partitioning their disks and left the first track blank. Even today, the first partition generally starts at the second track:

```
# sfdisk -l -uS /dev/sda

Disk /dev/sda: 243152 cylinders, 255 heads, 63 sectors/track
Units = sectors of 512 bytes, counting from 0

   Device Boot    Start       End   #sectors  Id  System
/dev/sda1   *        63    996029     995967  83  Linux
[...]
```
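The following bash sketch shows how far into a stripe a partition starts, given its start sector as printed by `sfdisk -uS`. A result of 0 means the partition is aligned. The helper name `stripe_offset_bytes` is hypothetical, and the sketch assumes 512-byte sectors.

```shell
#!/usr/bin/env bash
# Hypothetical helper: how many bytes into a stripe a partition starts,
# given its start sector (512-byte sectors) and the stripe size in KByte.
# 0 means the partition is aligned.
stripe_offset_bytes() {
  local start_sector=$1 stripe_kb=$2
  echo $(( start_sector * 512 % (stripe_kb * 1024) ))
}

stripe_offset_bytes 63 64    # prints 32256: the classic DOS layout is misaligned
stripe_offset_bytes 128 64   # prints 0: aligned

# On a live system the start sector can be read from sysfs, e.g.:
#   stripe_offset_bytes "$(cat /sys/block/sda/sda1/start)" 64
```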

This is quite bad if the partition sits on our shiny new RAID box with 64 KByte stripes: everything is shifted 63*512 = 32256 bytes out of alignment. That is a suboptimal start for anyone trying to optimize disk access with the stripe size in mind. If your RAID can be configured to use LBA64 addressing, the disk may show up as

1853941 cylinders, 64 heads, 32 sectors/track

With this geometry, putting the first partition on the second track (sector 32) is still not ideal. If you start the first partition on track 4 instead (sector 128, exactly 64 KByte into the disk), things look quite good.
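If you want to keep DOS-style track alignment and stripe alignment at the same time, the first usable start sector is the least common multiple of the track size and the stripe size, both counted in sectors. This bash sketch computes it, assuming 512-byte sectors. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Greatest common divisor (Euclid's algorithm).
gcd() {
  local a=$1 b=$2 t
  while (( b != 0 )); do
    t=$(( a % b )); a=$b; b=$t
  done
  echo "$a"
}

# Hypothetical helper: smallest non-zero start sector that lies on both a
# track boundary and a stripe boundary, i.e. lcm(track, stripe) in sectors.
first_aligned_sector() {
  local sectors_per_track=$1 stripe_kb=$2
  local stripe_sectors=$(( stripe_kb * 1024 / 512 ))
  local g
  g=$(gcd "$sectors_per_track" "$stripe_sectors")
  echo $(( sectors_per_track / g * stripe_sectors ))
}

first_aligned_sector 32 64   # prints 128: track 4 with 32 sectors/track
first_aligned_sector 63 64   # prints 8064: track 128 with 63 sectors/track
```

With 63 sectors per track, the nearest common boundary lies almost 4 MByte into the disk. This is why the advice below is to stop caring about tracks altogether.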

Note: some partitioning tools let you put partition boundaries wherever you like. They will warn you about DOS incompatibility, but when this is your only choice, don't be intimidated. Linux deals fine with partitions starting anywhere you put them. Just make sure they are aligned to the stripe size.

It is best to avoid the DOS partitioning problem altogether by giving your whole (unpartitioned) RAID device to LVM for management. If you need a classic partition to boot your box, split the RAID into two volumes: a small one for the boot partition and a large one for all the LVM space.

By default, LVM allocates disk space in chunks (physical extents) of 4 MByte. This goes very well with 64 KByte stripes, since each extent covers exactly 64 stripes.
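The arithmetic is trivial, but a bash sketch makes the condition explicit: the extent size must be an exact multiple of the stripe size. `stripes_per_extent` is a hypothetical helper, and both sizes are given in KByte.

```shell
#!/usr/bin/env bash
# Hypothetical helper: number of stripes per LVM physical extent.
# Fails if the extent size is not an exact multiple of the stripe size.
stripes_per_extent() {
  local extent_kb=$1 stripe_kb=$2
  if (( extent_kb % stripe_kb != 0 )); then
    echo "extent size is not a multiple of the stripe size" >&2
    return 1
  fi
  echo $(( extent_kb / stripe_kb ))
}

stripes_per_extent 4096 64   # prints 64
```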

When you set up an LVM physical volume (PV), it starts with a metadata area, and your physical extents (PE) are only allocated after that area. The pvcreate option --metadatasize lets you configure how big the metadata area should be. In our case, LVM allocated 192 KByte for metadata by default (check with pvs -o pe_start). Since this is a multiple of our 64 KByte stripe size, we did not investigate any further.
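To check this on your own PV, you can ask pvs for pe_start in KByte without a unit suffix and test it against the stripe size. This is a bash sketch. The helper name is hypothetical, `/dev/sdb` is a placeholder for your RAID device, and the sketch assumes pe_start is a whole number of KByte.

```shell
#!/usr/bin/env bash
# Hypothetical helper: is the first physical extent on a stripe boundary?
# Expects pe_start in KByte, as printed by e.g.
#   pvs --noheadings --nosuffix --units k -o pe_start /dev/sdb
pe_start_aligned() {
  local pe_start_kb=$1 stripe_kb=$2
  pe_start_kb=${pe_start_kb//[[:space:]]/}   # pvs pads the value with blanks
  pe_start_kb=${pe_start_kb%%.*}             # drop the ".00" fraction
  if (( pe_start_kb % stripe_kb == 0 )); then
    echo yes
  else
    echo no
  fi
}

pe_start_aligned "  192.00" 64   # prints yes (192 = 3 x 64)
```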

With the partitions aligned, it is now the file system's turn. The Linux ext3 file system can be tuned to a stripe size by setting the "stride", which is given in file system blocks. With the usual ext3 block size of 4 KByte, we need a stride of 64 / 4 = 16 for the stripes to match up:

```
mkfs -t ext3 -E stride=16 /dev/local/lvm_volume
```
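If your stripe size or block size differs, the stride is simply their ratio. This bash sketch computes it. `ext3_stride` is a hypothetical helper, sizes are in KByte, and the block size defaults to the 4 KByte assumed above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: ext3 stride (in file system blocks) for a given
# RAID stripe size. Block size defaults to 4 KByte.
ext3_stride() {
  local stripe_kb=$1 block_kb=${2:-4}
  if (( stripe_kb % block_kb != 0 )); then
    echo "stripe size is not a multiple of the block size" >&2
    return 1
  fi
  echo $(( stripe_kb / block_kb ))
}

ext3_stride 64      # prints 16
ext3_stride 128     # prints 32
ext3_stride 64 1    # prints 64, for a file system with 1 KByte blocks
```

Pass the result to mkfs as `-E stride=N`. If you pick a block size explicitly with `-b`, compute the stride for that block size.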

And if you can somehow manage to put your file system's journal on a separate device (an external journal), this will be the single most effective way to speed up real-world performance. Read my notes on setting up an external journal.

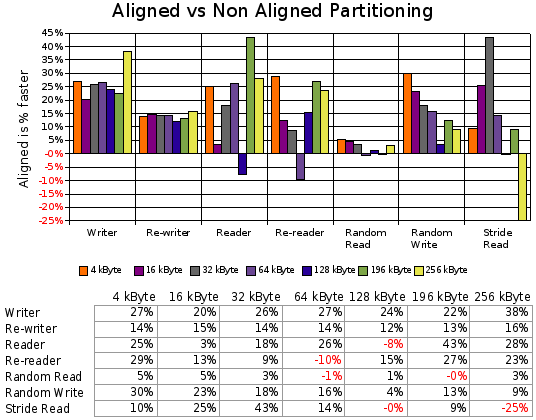

So what is there to gain by properly aligning partitions? I used IOzone to get some numbers. To see what performance we get from the actual RAID system, Linux was booted into single user mode with the mem=256M parameter. This limits available memory to 256 MByte, effectively minimizing the effects of the buffer cache (we want to measure disk performance and not memory performance, after all).

I had IOzone perform several tests at various record sizes, always with a total file size of 2 GByte. The partition with the test data was unmounted between tests (the -U option) to flush the remaining buffer cache:

```
iozone -s2G -j 16 -r4k -r16k -r32k -r64k -r128k -r196k -r256k \
       -R -i 0 -i 1 -i 2 -i 5 -i 8 \
       -f /scratch/iozone.tmp -U /scratch
```

The results show a performance increase of up to 30% from properly aligning partitions to RAID stripes. Write operations in particular improve dramatically. A misaligned stripe-sized write straddles two stripes, so more parity blocks must be updated than for an aligned one. As with all benchmarks, the real test is your real-world usage pattern, so take these numbers with a grain of salt.

Out of the box, the Linux kernel does not expect to work with a single large disk several TByte in size. A few tuning parameters come to the rescue. Note, though, that the effect of these settings depends heavily on the workload of your machine. Larger numbers are not necessarily better.

First, increase the read-ahead of your RAID device. The value is given in KByte, and the default is 128 KByte. In this example we set it to 1 MByte:

```
echo 1024 > /sys/block/sda/queue/read_ahead_kb
```
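The same setting can also be made with `blockdev --setra`, which counts in 512-byte sectors rather than KByte. This bash sketch does the conversion. The helper name is hypothetical, and it only prints the command rather than running it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a read-ahead in KByte (read_ahead_kb)
# into 512-byte sectors (the unit blockdev --setra expects).
readahead_kb_to_sectors() {
  echo $(( $1 * 1024 / 512 ))
}

echo "blockdev --setra $(readahead_kb_to_sectors 1024) /dev/sda"
# prints: blockdev --setra 2048 /dev/sda
```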

A lot of work has gone into the 2.6.x Linux kernel to optimize disk access by properly scheduling I/O requests. The queue depth of the device and the request queue of the scheduler seem to interact somehow. I have not looked at the code, but mailing list evidence suggests that things work better if the device queue depth is lower than the scheduler's queue size (nr_requests), so:

```
# default 128
echo 256 > /sys/block/sda/queue/nr_requests

# default 256
echo 128 > /sys/block/sda/device/queue_depth
```
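To verify that the relationship holds after tuning, you can compare the two sysfs values. This is a bash sketch with a hypothetical function name. The `SYSFS_ROOT` variable is an assumption added only so the check can be tried against a scratch copy of the tree. It defaults to `/sys`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report whether the device queue depth is below the
# scheduler's nr_requests. SYSFS_ROOT defaults to /sys.
queue_depth_below_nr_requests() {
  local dev=$1 root=${SYSFS_ROOT:-/sys}
  local nr depth
  nr=$(cat "$root/block/$dev/queue/nr_requests") || return 1
  depth=$(cat "$root/block/$dev/device/queue_depth") || return 1
  if (( depth < nr )); then
    echo "yes ($depth < $nr)"
  else
    echo "no ($depth >= $nr)"
  fi
}

# On a live system:
#   queue_depth_below_nr_requests sda
```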

While we are at it, we also switch to the powerful 'cfq' scheduler. If you are running RHEL or SuSE, this will already be the default:

```
echo cfq > /sys/block/sda/queue/scheduler
```
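Reading the scheduler file back lists all available schedulers, with the active one in square brackets (for example `noop anticipatory deadline [cfq]`). This bash sketch extracts the active one. The function name is hypothetical, and the exact list of schedulers depends on your kernel.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the scheduler marked with [brackets] in the
# contents of /sys/block/<dev>/queue/scheduler.
active_scheduler() {
  local line=$1
  line=${line#*\[}     # drop everything up to and including "["
  echo "${line%%]*}"   # drop "]" and everything after it
}

active_scheduler "noop anticipatory deadline [cfq]"   # prints cfq

# On a live system:
#   active_scheduler "$(cat /sys/block/sda/queue/scheduler)"
```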

These settings are lost on reboot. To have them applied at boot time, add them to a start script.
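A minimal start-script sketch in bash might look like the following. It uses the device name and values from this section, and the function name is hypothetical. It sets the scheduler first, because on some kernels switching schedulers can reset nr_requests. `SYSFS_ROOT` is an added assumption so the function can be tried against a scratch directory. It defaults to `/sys`. The function returns non-zero if any write fails, for example when a driver does not allow changing queue_depth.

```shell
#!/usr/bin/env bash
# Hypothetical boot-time helper that re-applies the queue tuning above.
# DEV defaults to sda; SYSFS_ROOT defaults to /sys.
apply_raid_queue_tuning() {
  local dev=${1:-sda} root=${SYSFS_ROOT:-/sys}
  local q="$root/block/$dev/queue"
  # Scheduler first: switching it may reset nr_requests on some kernels.
  echo cfq  > "$q/scheduler"                        || return 1
  echo 1024 > "$q/read_ahead_kb"                    || return 1
  echo 128  > "$root/block/$dev/device/queue_depth" || return 1
  echo 256  > "$q/nr_requests"                      || return 1
}

# Call this from your start script, e.g.:
#   apply_raid_queue_tuning sda
```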

One thing the I/O scheduler developers are particularly proud of is their ability to keep the system running smoothly even when lots of reads and writes are going on at the same time. The deadline and anticipatory schedulers in particular specialize in this. With a HW RAID, this still works fine as long as only reads are concerned. With a mix of reads and writes, the whole scheme breaks down. Most HW RAID controllers do their own caching internally, so they accept write requests at a much higher rate than they can fulfill them. Once a write request has been submitted, it sits in the RAID controller's cache, and scheduling it is up to the RAID hardware, out of the control of the Linux I/O scheduler. I have not found a way around this problem yet. If you know more, I would be very interested to hear about it.
